
A new self-serve edge AI platform for building smart on-device solutions

Xnor has just released hundreds of deep learning models that you can embed in an application on your Toradex board with a few lines of code.

AI has traditionally depended on expensive hardware running in the cloud, which limited its use to a few large companies. Even with the tools available, building AI products required extensive deep learning expertise to design, train, and deploy solutions under a host of constraints, including power, memory, and latency. This made developing on-device AI nearly impossible.

That’s no longer the case.

With AI2GO, we've packaged a model and an inference engine for a specific hardware target into a single Xnor Bundle (XB), ready for download. You no longer need to worry about data collection, annotation, training, model architecture, or performance optimization.

AI2GO offers more than a hundred fully trained models, optimized to run on a wide range of resource-constrained devices under constraints such as memory, power, and latency. With just a few lines of code, developers are already using XBs to build solutions for retail analytics, smart homes, and industrial IoT.

Using AI2GO is simple. Here’s how it works:

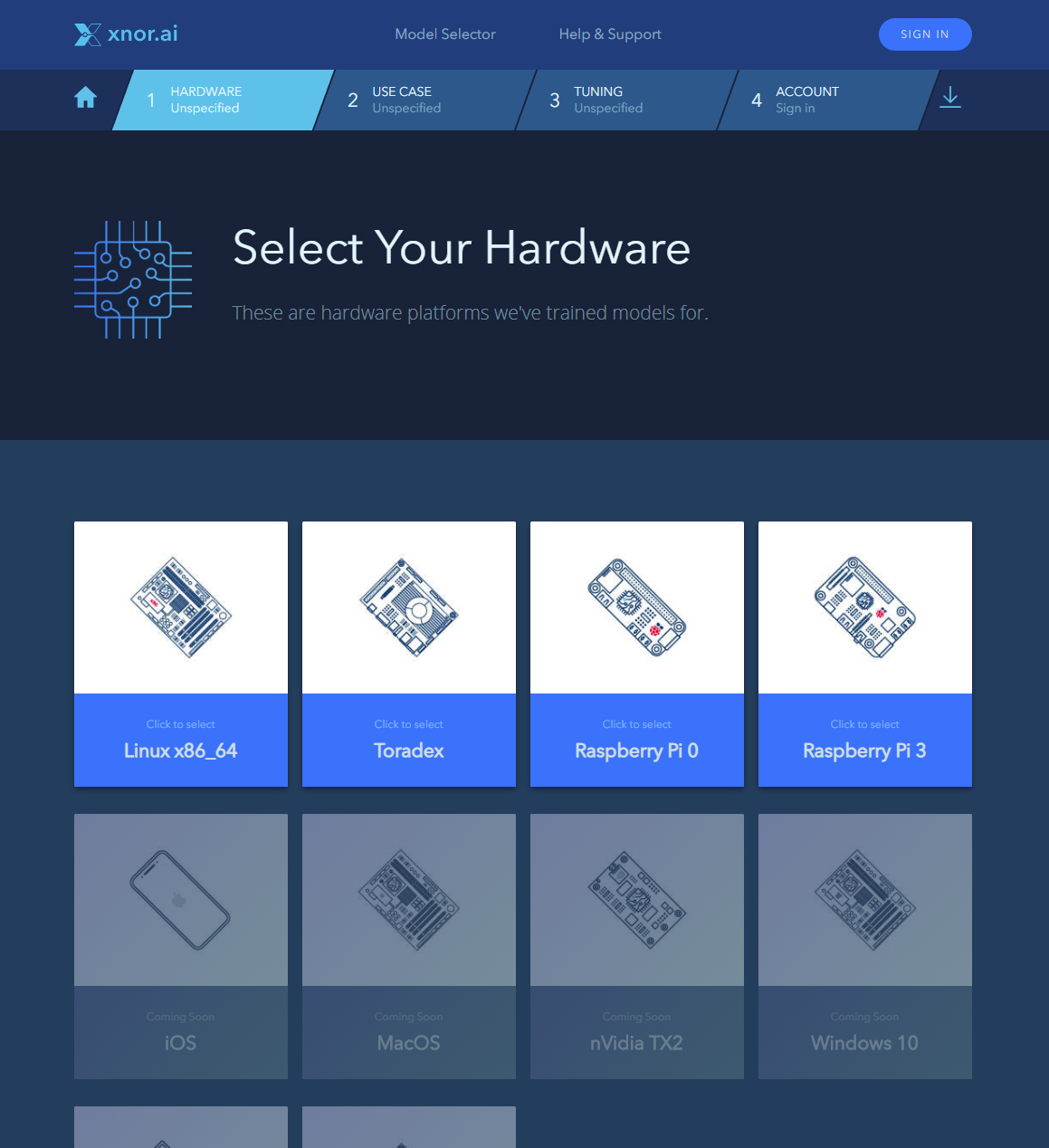

First, select your preferred hardware target (e.g., Toradex).

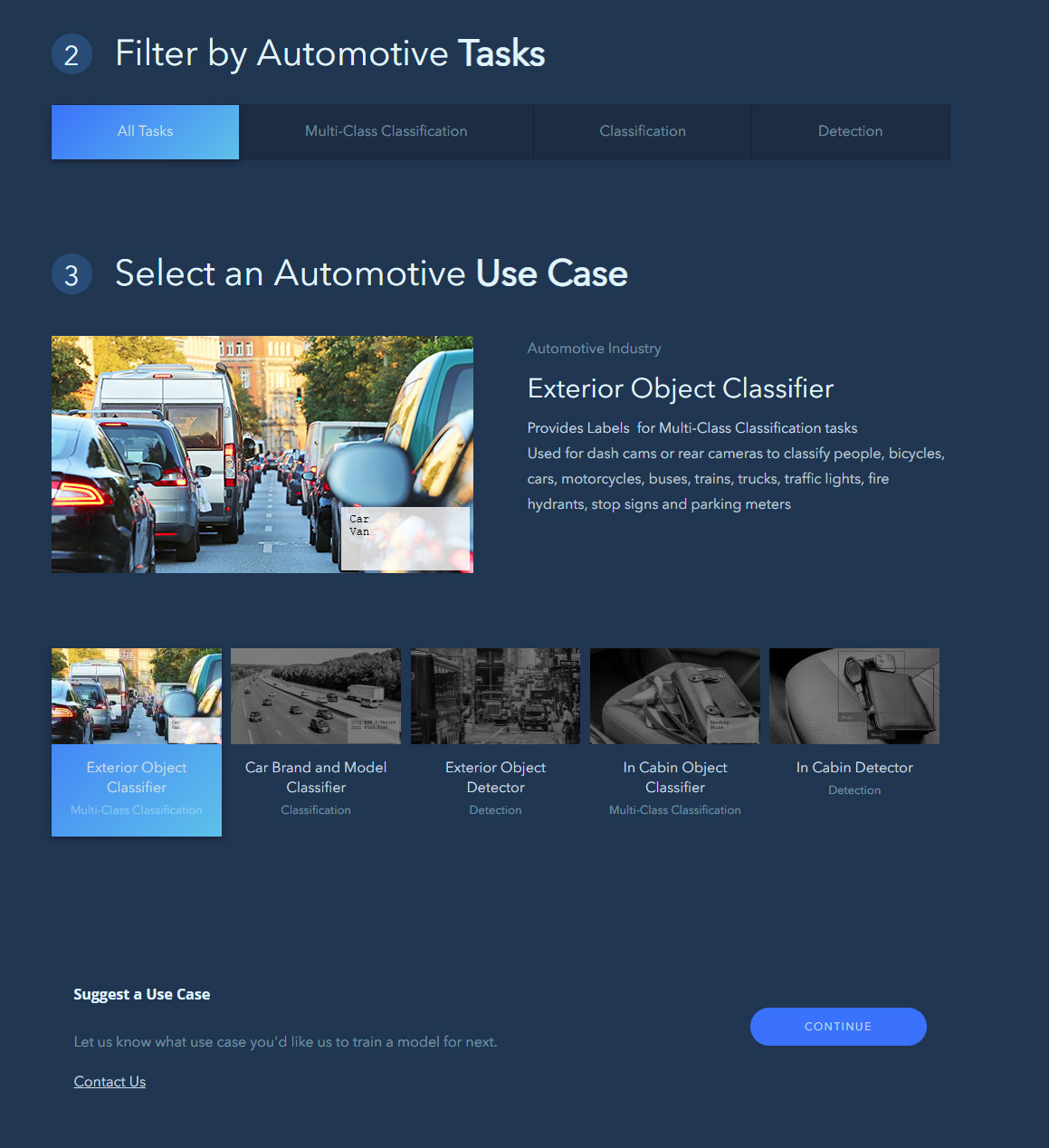

Next, select an AI use case, for example a "pet classifier for a home security camera", a "person detector for a dash cam", or a "person segmenter for video conferencing applications".

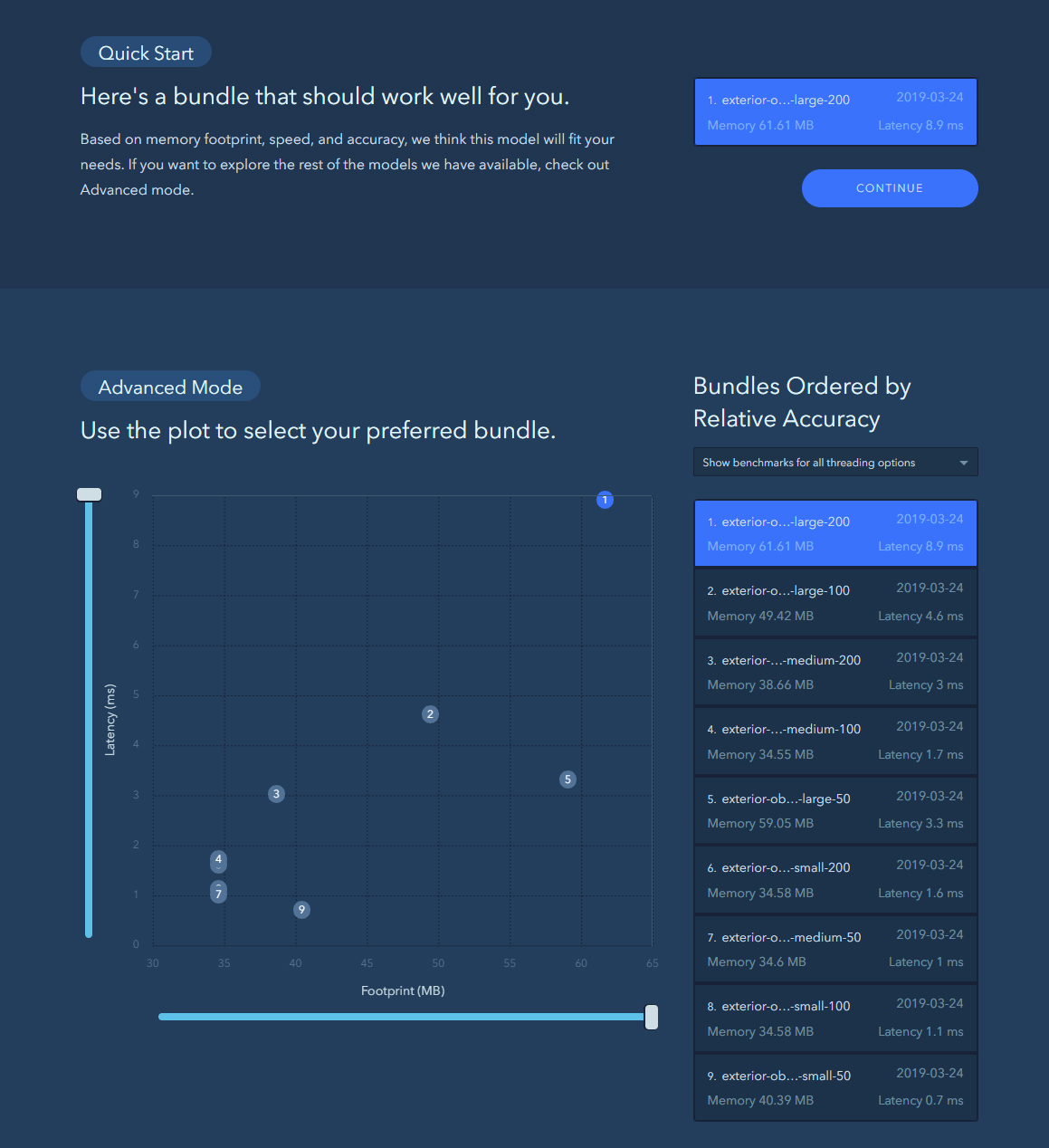

Because AI2GO models are designed to run in resource-constrained environments, you can then tune your model for latency (in milliseconds) and memory footprint (in megabytes) so that it fits within your particular set of constraints. Once you've specified your constraints, AI2GO lists the available models, ranked by accuracy.
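Conceptually, this constraint-driven selection is a filter followed by a sort: drop every model that exceeds the latency or memory budget, then order the remainder by accuracy. The sketch below is a hypothetical illustration only. `CandidateModel` and `rank_models` are made-up names, not part of AI2GO or the Xnor SDK, and the sketch assumes each model's latency and memory footprint have already been measured on the chosen hardware target.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CandidateModel:
    """Hypothetical description of a downloadable model (not an AI2GO type)."""
    name: str
    latency_ms: float  # inference latency on the target, in milliseconds
    memory_mb: float   # memory footprint, in megabytes
    accuracy: float    # task accuracy, e.g. in the range 0.0 to 1.0


def rank_models(
    candidates: List[CandidateModel],
    max_latency_ms: float,
    max_memory_mb: float,
) -> List[CandidateModel]:
    """Return the models that fit both budgets, most accurate first."""
    # Keep only models that satisfy BOTH the latency and the memory budget.
    feasible = [
        m for m in candidates
        if m.latency_ms <= max_latency_ms and m.memory_mb <= max_memory_mb
    ]
    # Highest accuracy first; Python's sort is stable, so ties keep input order.
    return sorted(feasible, key=lambda m: m.accuracy, reverse=True)
```

Tightening either budget can only shrink the feasible set, which is why a stricter latency or memory target usually means accepting a less accurate model.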

Finally, download an Xnor Bundle (XB), a module containing a deep learning model and an inference engine. Xnor also provides an accompanying SDK that includes code samples, demo applications, benchmarking tools, and technical documentation that make it simple for anyone to start building smart applications.

Xnor.ai provides technology for artificial intelligence and deep learning applications on resource-constrained, low-power hardware. For example, it has run object-detection models in real time on three cameras using Toradex's efficient Apalis iMX8 System on Module (SoM), based on the NXP® i.MX 8QuadMax SoC, together with the Ixora carrier board.

Xnor's technology also works on much more resource-constrained modules, such as the Colibri iMX6ULL, Colibri iMX6, Colibri iMX7, and Colibri iMX8X.

Xnor.ai also provides services to help you solve your machine learning problems with solutions optimized to run on Toradex SoMs. Many problems that would otherwise require a high-power PC or an NVIDIA® Jetson TX1 or TX2 module can be solved with more cost- and power-efficient hardware.

A demo featuring the Toradex Apalis iMX8 System on Module (SoM) showcases Xnor object-detection models running in real time on three cameras.

Together, Xnor and Toradex are enabling AI on edge devices where technology and the real world intersect, providing a fast, resilient, and autonomous solution that works without interruption. This collaboration unlocks use cases that require edge AI on resource-constrained, low-power hardware, capabilities that were previously available only in the cloud or on specialized hardware.

- Xnor - Toradex partnership page

- AI2GO getting started tutorial

- Post questions on Xnor’s AI2GO forum

- Contact Xnor sales

- Xnor will demo the technology and onboard new users at the Embedded Vision Summit, booth #419, in California on May 20-23.

- Xnor’s AI2GO serves up custom edge AI models with a few clicks - TechCrunch

- Xnor releases AI2GO, a do-it-yourself software platform for artificial intelligence - Geekwire