
Is Torizon OTA Safe From Power Loss?

In a broad context, software updates are nothing new. Package managers, update mechanisms provided by OS vendors, and manual updates have been present in the world of desktops and servers for a long time. That has not been the case for embedded systems until recently.

Embedded systems cannot reliably use the traditional desktop and server update mechanisms, though. They face specific challenges such as unattended updates, intermittent connectivity, and keeping updates consistent across a fleet of devices, among others. One of those challenges, and the main topic of this article, is an unexpected power cut.

With the growing number of connected devices, largely due to the rise of the Internet of Things, the term Over-the-Air (OTA) updates was coined for solutions that address those concerns. Toradex focuses on making embedded computing easy; therefore, we have created TorizonCore, an easy-to-use industrial Linux software platform. One of its services is Torizon remote updates, a fully integrated solution that allows updating applications and the TorizonCore OS out of the box.

Many of our customers are curious about the reliability of Torizon remote updates in scenarios where power cuts are likely to happen. In this blog post, we address this question by looking under the hood of the client side of Torizon OTA, which is the part that runs on the embedded system. It is built on OSTree, combined with other projects such as Aktualizr and Greenboot.

The information presented in this blog post is based on the OSTree documentation about atomic upgrades, a discussion on the GNOME ostree-list mailing list, quick peeks at the OSTree source code, and some experimentation.

Before we dive into the power-cut topic, let’s briefly recap what OSTree is, in case you have never heard of it. According to the project page:

libostree is both a shared library and suite of command line tools that combines a “git-like” model for committing and downloading bootable filesystem trees, along with a layer for deploying them and managing the bootloader configuration.

Let’s break down some of those concepts and add a few more details. The first thing to notice is that OSTree runs on top of a regular filesystem and, due to the way it works, requires support for hardlinks. In practice, this means that almost any Linux filesystem is supported.
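To make the hardlink requirement concrete, here is a small Python sketch that probes whether a directory's filesystem accepts hardlinks. It creates a file, links it, and checks that both names share one inode. The function name `supports_hardlinks` is hypothetical. This is our own illustration and not part of OSTree.

```python
import os
import tempfile


def supports_hardlinks(directory: str) -> bool:
    """Return True if the filesystem holding `directory` supports hardlinks."""
    with tempfile.TemporaryDirectory(dir=directory) as tmp:
        original = os.path.join(tmp, "original")
        link = os.path.join(tmp, "link")
        with open(original, "w") as f:
            f.write("probe")
        try:
            os.link(original, link)
        except OSError:
            return False  # e.g. FAT-based filesystems reject hardlinks
        st_original, st_link = os.stat(original), os.stat(link)
        # Both names must resolve to the same inode, which now has two links.
        return st_original.st_ino == st_link.st_ino and st_original.st_nlink == 2


if __name__ == "__main__":
    print(supports_hardlinks(tempfile.gettempdir()))
```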

The “git-like” part means that OSTree tracks filesystem trees on a per-file basis. It keeps different trees on refs, similar to Git branches. Users can make changes to the filesystem and commit them, or check out an existing commit. This is the basis of the OTA update mechanism.
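A content-addressed store illustrates the “git-like” model. The following Python sketch is a simplification, not OSTree's code. It makes three moves:

- Each object is named after the SHA-256 of its content and placed under objects/<first two hex digits>/<rest>.file. This is the same layout that appears under /sysroot/ostree/repo/objects/ in the board output in this article.
- A commit is recorded as a manifest object.
- A ref is pointed at that commit.

Identical files are stored only once, which is what lets two versions share unchanged files. Real OSTree also hashes file metadata (owner, mode, extended attributes) and uses separate directory objects; those details are omitted here. All function names are hypothetical.

```python
import hashlib
import json
import os
import tempfile


def store_object(repo: str, data: bytes, kind: str = "file") -> str:
    """Store data as objects/<xx>/<rest>.<kind>, named by its SHA-256."""
    checksum = hashlib.sha256(data).hexdigest()
    obj_dir = os.path.join(repo, "objects", checksum[:2])
    obj_path = os.path.join(obj_dir, f"{checksum[2:]}.{kind}")
    os.makedirs(obj_dir, exist_ok=True)
    if not os.path.exists(obj_path):  # identical content is stored only once
        with open(obj_path, "wb") as f:
            f.write(data)
    return checksum


def commit(repo: str, ref: str, tree: dict[str, bytes]) -> str:
    """Store every file of `tree`, store a manifest as the commit, move `ref` to it."""
    manifest = {path: store_object(repo, content) for path, content in tree.items()}
    encoded = json.dumps(manifest, sort_keys=True).encode()
    commit_checksum = store_object(repo, encoded, kind="commit")
    ref_path = os.path.join(repo, "refs", "heads", ref)
    os.makedirs(os.path.dirname(ref_path), exist_ok=True)
    with open(ref_path, "w") as f:
        f.write(commit_checksum + "\n")
    return commit_checksum


def count_objects(repo: str, kind: str) -> int:
    """Count stored objects of one kind ("file" or "commit")."""
    total = 0
    for _dirpath, _dirnames, filenames in os.walk(os.path.join(repo, "objects")):
        total += sum(1 for name in filenames if name.endswith("." + kind))
    return total


if __name__ == "__main__":
    repo = tempfile.mkdtemp()
    commit(repo, "main", {"usr/bin/ostree": b"A", "usr/bin/docker": b"docker v1"})
    commit(repo, "main", {"usr/bin/ostree": b"A", "usr/bin/docker": b"docker v2"})
    # Two versions with four file entries, but only three file objects:
    # the unchanged "ostree" content is stored once and shared.
    print(count_objects(repo, "file"))  # 3
```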

There is much more to OSTree than simply replacing files. OSTree can:

- store bootloader configuration according to the Boot Loader Specification;
- keep persistent data across updates in /var and /home, which OSTree leaves untouched, so the operating system itself must manage any changes to that data;
- perform a 3-way merge of /etc, in which local changes are carried over to the new update.
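The 3-way merge of /etc can be sketched at file granularity. The Python sketch below is a simplification, not OSTree's implementation. It models each version of /etc as a dictionary mapping paths to contents:

- the defaults shipped by the running deployment;
- the current /etc, including local edits;
- the defaults shipped by the new deployment.

Files the user added or modified keep their local version, and files the user deleted stay deleted. Everything else comes from the new deployment. The function name `merge_etc` is hypothetical.

```python
def merge_etc(old_default: dict[str, str],
              current: dict[str, str],
              new_default: dict[str, str]) -> dict[str, str]:
    """Return the /etc contents for the new deployment.

    old_default: /etc as shipped by the currently booted deployment
    current:     /etc as it is now on the device, including local changes
    new_default: /etc as shipped by the new deployment
    """
    merged = dict(new_default)  # start from what the new deployment ships
    for path in old_default.keys() | current.keys():
        if old_default.get(path) == current.get(path):
            continue  # untouched locally: the new default wins
        if path in current:
            merged[path] = current[path]  # added or modified locally: keep it
        else:
            merged.pop(path, None)  # deleted locally: keep it deleted
    return merged


if __name__ == "__main__":
    old = {"hostname": "torizon", "motd": "hi", "fstab": "x"}
    cur = {"hostname": "my-board", "motd": "hi", "hello.txt": "hello"}
    new = {"hostname": "torizon", "motd": "hi v2", "fstab": "x2", "new.conf": "n"}
    print(merge_etc(old, cur, new))
```

In this example, the locally changed hostname and the new hello.txt survive. The untouched motd picks up the new default, and the locally deleted fstab stays gone.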

In Torizon remote updates, OSTree is used together with another project named Aktualizr, and it is transparent to users throughout most of the process. You may come across the name, but it is unlikely that you will need to use it manually at a low level. Still, if you want to see what working with OSTree manually looks like, check out our article about it.

- Aktualizr is a “daemon-like” implementation of the Uptane SOTA standard. It manages secure updates end to end, from the server to the board, and uses OSTree for this purpose.

- TorizonCore always keeps two OSTree references after an update. This allows the system to roll back to a known working state if the new update prevents the system from booting.

- The rollback logic is implemented by Aktualizr, which makes use of OSTree. If the system does not boot due to a kernel error or a critical failure in a userspace operation, the rollback mechanism is activated.
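Rollback of the kind described in the list above is commonly built on a boot-attempt counter. The Python sketch below shows that generic pattern. It is not Aktualizr's code, and the names `BootState`, `on_boot`, `mark_boot_successful`, and the limit of three attempts are hypothetical.

Each boot of a freshly installed deployment increments a persistent counter. If userspace never confirms a healthy boot before the limit is exceeded, the next boot falls back to the previous, known-good deployment.

```python
from dataclasses import dataclass

BOOT_LIMIT = 3  # hypothetical: unconfirmed boots tolerated before rolling back


@dataclass
class BootState:
    """State that a real system would keep in persistent storage."""
    current: str          # deployment selected for the next boot
    previous: str         # last deployment known to boot correctly
    pending: bool = True  # True until the new deployment is confirmed healthy
    boot_count: int = 0


def on_boot(state: BootState) -> str:
    """Run early on every boot; return the deployment to boot."""
    if state.pending:
        state.boot_count += 1
        if state.boot_count > BOOT_LIMIT:
            # Too many unconfirmed boots: go back to the known-good deployment.
            state.current, state.previous = state.previous, state.current
            state.pending = False
            state.boot_count = 0
    return state.current


def mark_boot_successful(state: BootState) -> None:
    """Run once userspace reports it is healthy; the new deployment is kept."""
    state.pending = False
    state.boot_count = 0
```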

This is a very compact pick of highlights. To learn more about the Torizon remote updates implementation, read our article Torizon OTA Technical Overview.

From the ground up, OSTree is designed to provide atomic upgrades. In a nutshell, this means that if an upgrade is interrupted in the middle of the process, either by a system crash or by a power cut, the system will keep either its previous state or the new one. There is no middle ground, and you won’t end up with corrupted files. The implementation relies on a symbolic link that is swapped to switch between deployments. You can learn more in the OSTree documentation on Atomic Upgrades.
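The symbolic-link technique behind atomic upgrades can be sketched in a few lines of Python, assuming a POSIX filesystem. The new link is created under a temporary name and then renamed over the old one. Because rename(2) replaces the destination atomically, after a crash the link points either to the old deployment or to the new one, never to something in between.

The link and directory names used here (`current`, `deploy-a`, `deploy-b`) are hypothetical. OSTree's real code also manages bootloader entries.

```python
import os
import tempfile


def swap_symlink(link_path: str, new_target: str) -> None:
    """Atomically repoint the symlink at `link_path` to `new_target`."""
    tmp_link = link_path + ".tmp"
    if os.path.lexists(tmp_link):
        os.unlink(tmp_link)  # leftover from an interrupted previous attempt
    os.symlink(new_target, tmp_link)
    os.replace(tmp_link, link_path)  # the atomic step: rename over the old link
    # Make the rename itself durable by syncing the parent directory.
    dir_fd = os.open(os.path.dirname(os.path.abspath(link_path)), os.O_RDONLY)
    try:
        os.fsync(dir_fd)
    finally:
        os.close(dir_fd)


if __name__ == "__main__":
    root = tempfile.mkdtemp()
    for name in ("deploy-a", "deploy-b"):
        os.mkdir(os.path.join(root, name))
    link = os.path.join(root, "current")
    os.symlink("deploy-a", link)  # relative target, resolved next to the link
    swap_symlink(link, "deploy-b")
    print(os.readlink(link))  # deploy-b
```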

This upgrade mechanism assumes that two OSTree deployments are already available on the board. If so, only the switch from deployment A to deployment B takes place. But how does OSTree handle cases where bad things happen while a new deployment is still being fetched? After all, files could end up corrupted or missing. Two stages need to be considered:

- Downloading a new OSTree ref

- Creating the deployment

During the download of a new OSTree ref, files are staged in a temporary directory tied to the kernel “boot ID”, a unique identifier generated on every boot. The staging process writes the objects into the staging directory and then calls fsync() on them. This guarantees that they are written to storage and will be available even after a system crash. You can inspect the source code, especially ostree-repo-commit.c, for implementation details and insightful comments.
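The staging step can be sketched as follows. This Python sketch simplifies what the text describes and is not a port of ostree-repo-commit.c. Objects are written into tmp/staging-<boot-ID> and flushed with fsync(). Only when the whole transaction is complete are they renamed into objects/. The function names are hypothetical, and the boot ID is passed in as a plain string.

```python
import hashlib
import os


def fsync_dir(path: str) -> None:
    """fsync a directory so that entries created or renamed in it are durable."""
    fd = os.open(path, os.O_RDONLY)
    try:
        os.fsync(fd)
    finally:
        os.close(fd)


def staging_dir(repo: str, boot_id: str) -> str:
    return os.path.join(repo, "tmp", f"staging-{boot_id}")


def stage_object(repo: str, boot_id: str, data: bytes) -> str:
    """Write one object into the per-boot staging directory and fsync it."""
    checksum = hashlib.sha256(data).hexdigest()
    stage = staging_dir(repo, boot_id)
    os.makedirs(stage, exist_ok=True)
    with open(os.path.join(stage, checksum + ".file"), "wb") as f:
        f.write(data)
        f.flush()
        os.fsync(f.fileno())  # the bytes reach storage before we continue
    fsync_dir(stage)
    return checksum


def commit_transaction(repo: str, boot_id: str) -> list[str]:
    """Move every staged object into objects/<xx>/<rest>.file, then drop staging."""
    stage = staging_dir(repo, boot_id)
    moved = []
    for name in sorted(os.listdir(stage)):
        checksum = name.removesuffix(".file")
        obj_dir = os.path.join(repo, "objects", checksum[:2])
        os.makedirs(obj_dir, exist_ok=True)
        final = os.path.join(obj_dir, checksum[2:] + ".file")
        os.rename(os.path.join(stage, name), final)  # atomic within one filesystem
        fsync_dir(obj_dir)
        moved.append(final)
    os.rmdir(stage)
    return moved
```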

If a power cut happens while staging is in progress, the board comes back with a new boot ID. The old staging directory is then no longer valid, and all objects in it are discarded, including valid ones. Notice that this approach is somewhat conservative: all previously downloaded content is lost, and OSTree doesn’t try to resume the download.
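The rule that a new boot invalidates earlier staging directories can be sketched in Python. This is an illustration rather than OSTree's pruning code. It reads the kernel's boot ID from /proc/sys/kernel/random/boot_id (Linux only). It then deletes every staging-* directory under the repository's tmp/ that belongs to a different boot, whether or not the objects inside were complete. The function names are hypothetical.

```python
import os
import shutil


def current_boot_id() -> str:
    """Return the random UUID the Linux kernel generates at every boot."""
    with open("/proc/sys/kernel/random/boot_id") as f:
        return f.read().strip()


def discard_stale_staging(repo: str, boot_id: str) -> list[str]:
    """Delete staging directories left behind by previous boots.

    Directories whose name starts with staging-<boot_id> belong to the running
    boot and are kept. Any other staging-* directory is removed wholesale,
    including objects that were completely written before the power cut.
    """
    tmp = os.path.join(repo, "tmp")
    removed = []
    if not os.path.isdir(tmp):
        return removed
    for name in sorted(os.listdir(tmp)):
        if name.startswith("staging-") and not name.startswith(f"staging-{boot_id}"):
            shutil.rmtree(os.path.join(tmp, name))
            removed.append(name)
    return removed
```

Calling discard_stale_staging(repo, current_boot_id()) on a scratch directory shows the behavior without touching a real repository.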

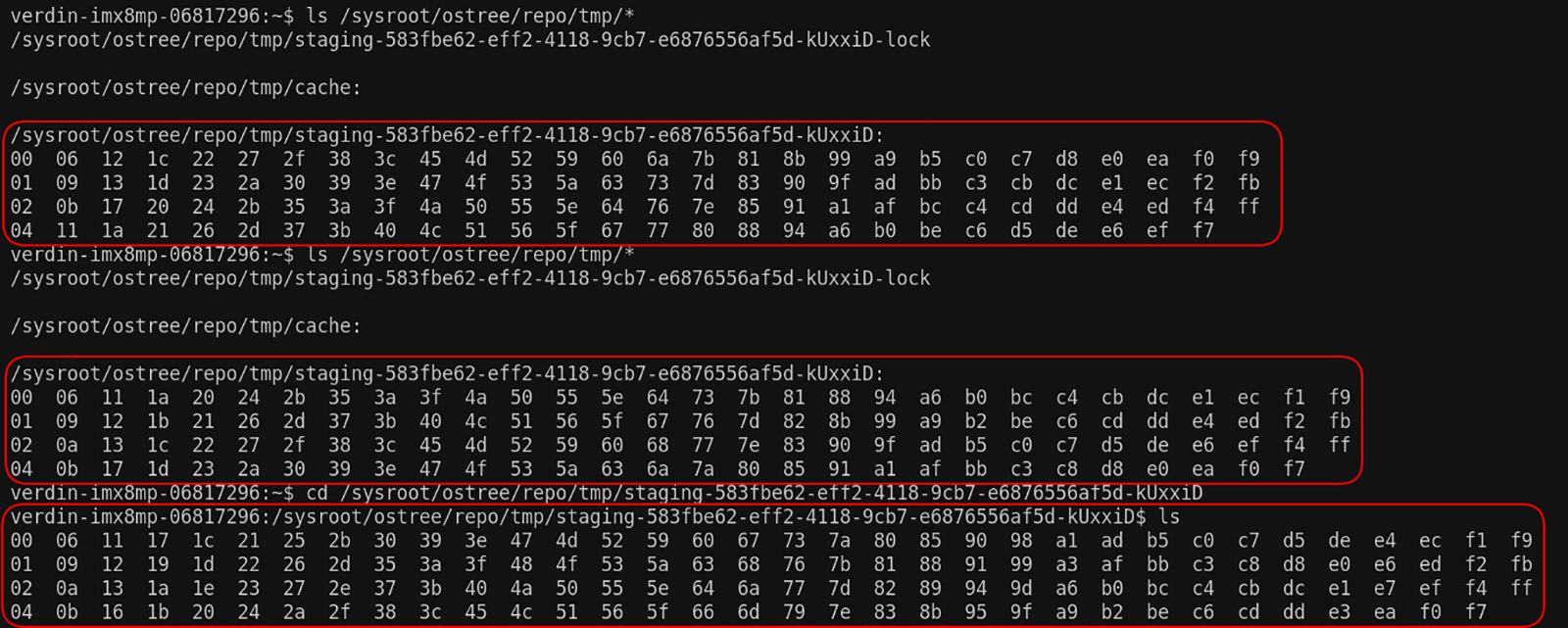

In practice, what does it look like? The files are staged under /sysroot/ostree/repo/tmp/staging-<boot-ID>.

Then cut the power. Once the board has rebooted, you will see that the update does not resume automatically, even though the old directory remains until OSTree’s pruning logic deletes it.

Start a new update and notice that a new staging-<boot-ID> directory is created.

Once this process is finished, the objects will be available under /sysroot/ostree/repo/objects/.

If you want to do it yourself, follow the instructions in Appendix I.

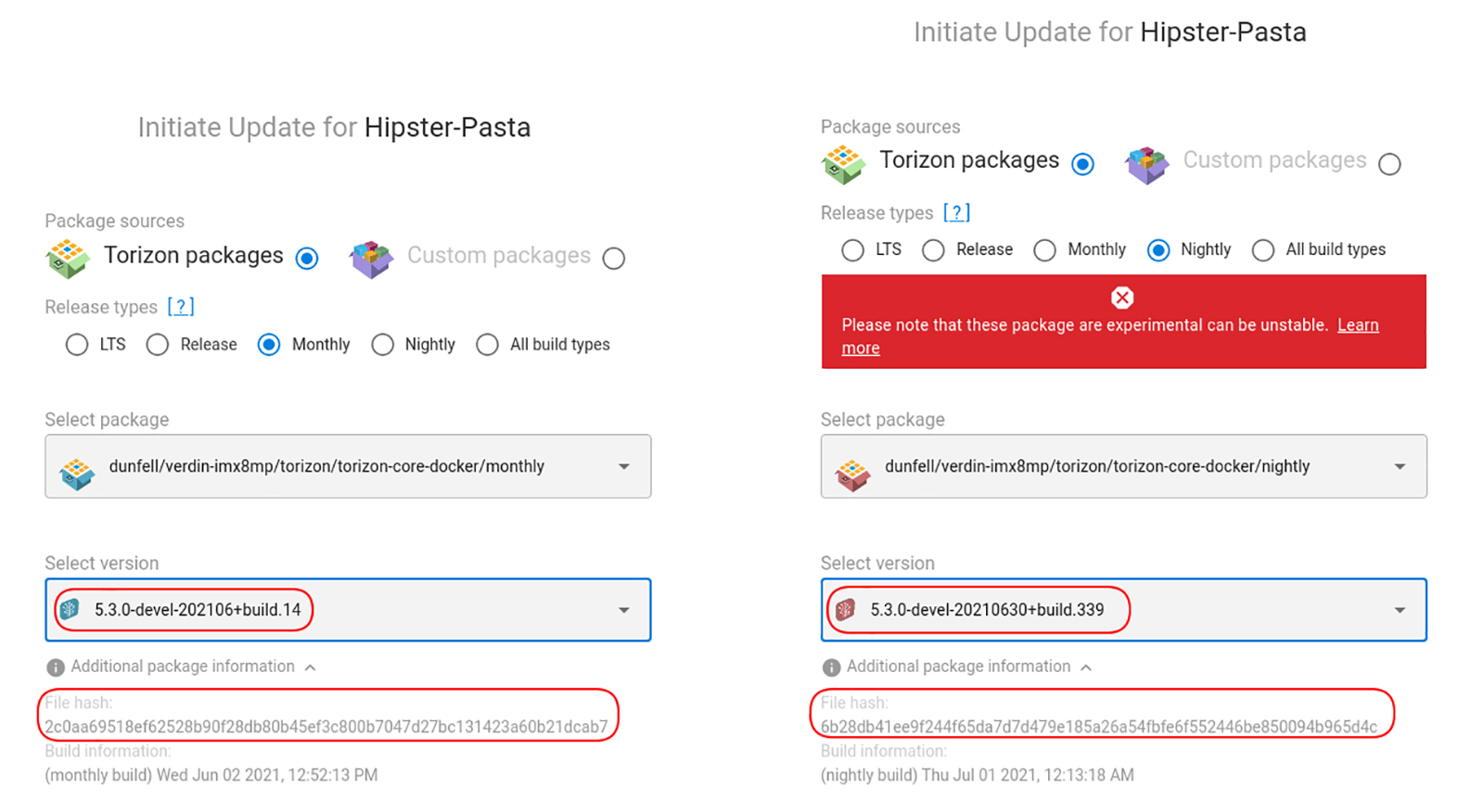

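During deployment, OSTree creates a hardlink farm: a directory tree whose files are hardlinks to the objects in the repository. It also carries local changes forward into the new /etc. If the system crashes at this stage, the incomplete hardlink farm is garbage-collected and recreated on the next upgrade attempt.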
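A hardlink farm checkout can be sketched in Python. This is our own simplification and not OSTree's deploy code. Every file of the deployment is created with os.link() pointing at an object already in the repository, so no file data is copied.

The tree is built under a hypothetical `.partial` name and renamed into place only when complete. A leftover `.partial` tree from an interrupted attempt is deleted and rebuilt from scratch, mirroring the recreate-after-crash behavior described above.

```python
import os
import shutil


def checkout_hardlink_farm(manifest: dict[str, str], deploy_dir: str) -> None:
    """Build deploy_dir as hardlinks to repository objects.

    manifest maps paths inside the deployment (e.g. "usr/bin/ostree") to
    object files in the repository.
    """
    partial = deploy_dir + ".partial"  # hypothetical naming for this sketch
    if os.path.exists(partial):
        shutil.rmtree(partial)  # leftover from a crash: discard and start over
    os.makedirs(partial)
    for rel_path, obj_path in sorted(manifest.items()):
        dest = os.path.join(partial, rel_path)
        os.makedirs(os.path.dirname(dest), exist_ok=True)
        os.link(obj_path, dest)  # a new name for the same inode, no data copied
    os.rename(partial, deploy_dir)  # the deployment appears only once complete
```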

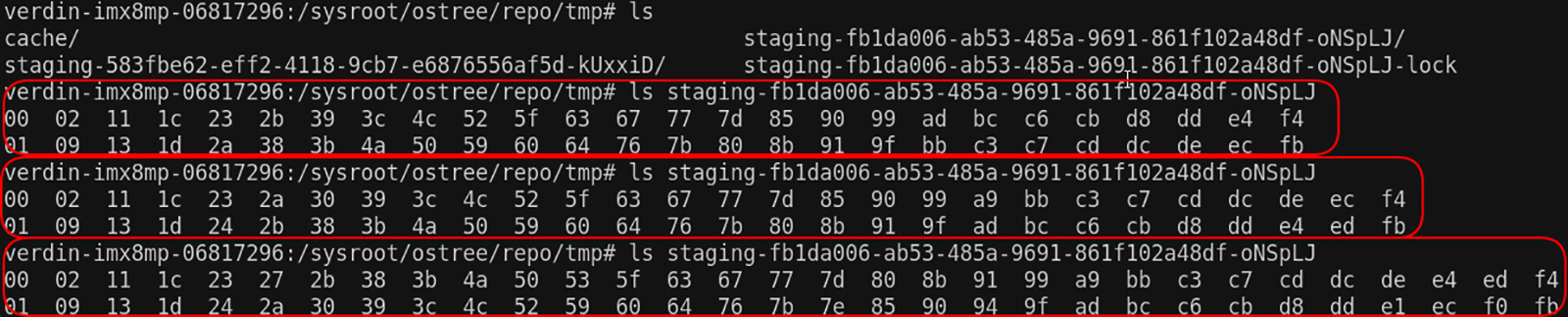

Let’s look at a hardlink farm in practice, using two TorizonCore versions:

- (1) 5.3.0-devel-202106+build.14 (hash 2c0aa6951…)

- (2) 5.3.0-devel-20210630+build.339 (hash 6b28db41…)

If you install version (1) and then update to version (2), you can check that each version has a corresponding entry in /sysroot/ostree/deploy/torizon/deploy/<commit hash>:

verdin-imx8mp-06817296:/usr/bin# ls /sysroot/ostree/deploy/torizon/deploy/
2c0aa69518ef62528b90f28db80b45ef3c800b7047d27bc131423a60b21dcab7.0
2c0aa69518ef62528b90f28db80b45ef3c800b7047d27bc131423a60b21dcab7.0.origin
6b28db41ee9f244f65da7d7d479e185a26a54fbfe6f552446be850094b965d4c.0
6b28db41ee9f244f65da7d7d479e185a26a54fbfe6f552446be850094b965d4c.0.origin

Between those versions, some files have changed while others haven’t. We can use the OSTree command-line tool together with grep to list the modified files in /usr/bin:

verdin-imx8mp-06817296:/usr/bin# ostree diff ostree/1/1/0 ostree/1/1/1 | grep -e "/usr/bin"

M /usr/bin/containerd
M /usr/bin/containerd-ctr
M /usr/bin/containerd-shim
M /usr/bin/docker
M /usr/bin/docker-proxy
M /usr/bin/dockerd
M /usr/bin/runc

Let’s take two files as examples and find out their inode numbers. Why inode numbers? Because OSTree uses hardlinks, and all hardlinks to a file point to the same inode:

- /usr/bin/docker changed, as we saw in the previous command.

- /usr/bin/ostree remained the same.

verdin-imx8mp-06817296:/usr/bin# ls -i /usr/bin/docker
537746 /usr/bin/docker
verdin-imx8mp-06817296:/usr/bin# ls -i /usr/bin/ostree
526540 /usr/bin/ostree

Then we can search for files with those inode numbers. As a reminder, 2c0aa6951… is the old deployment hash and 6b28db41… is the new one:

# /usr/bin/docker - new file
verdin-imx8mp-06817296:/usr/bin# find /sysroot/ostree/ -inum 537746
/sysroot/ostree/repo/objects/70/6f84a3f7b8231c23403340c13e952c4338d29aafed90952fe5307241ff3d14.file
/sysroot/ostree/deploy/torizon/deploy/6b28db41ee9f244f65da7d7d479e185a26a54fbfe6f552446be850094b965d4c.0/usr/bin/docker

# /usr/bin/docker - old file inode
verdin-imx8mp-06817296:/usr/bin# ls -i /sysroot/ostree/deploy/torizon/deploy/2c0aa69518ef62528b90f28db80b45ef3c800b7047d27bc131423a60b21dcab7.0/usr/bin/docker
527069 /sysroot/ostree/deploy/torizon/deploy/2c0aa69518ef62528b90f28db80b45ef3c800b7047d27bc131423a60b21dcab7.0/usr/bin/docker

# /usr/bin/ostree
verdin-imx8mp-06817296:/usr/bin# find /sysroot/ostree/ -inum 526540
/sysroot/ostree/repo/objects/a4/65bb7b3212965f151344a33910c4d0762ef55902bb5e76d64af2a90e0cee1d.file
/sysroot/ostree/deploy/torizon/deploy/2c0aa69518ef62528b90f28db80b45ef3c800b7047d27bc131423a60b21dcab7.0/usr/bin/ostree
/sysroot/ostree/deploy/torizon/deploy/6b28db41ee9f244f65da7d7d479e185a26a54fbfe6f552446be850094b965d4c.0/usr/bin/ostree

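Notice that the unchanged file /usr/bin/ostree has two entries under /sysroot/ostree/deploy/torizon/deploy/<commit hash>, one per deployment. Both share the inode of the same object in /sysroot/ostree/repo/objects/. The changed file /usr/bin/docker, on the other hand, has a different inode in each deployment. It is 537746 in the new one, which it shares with its repository object, and 527069 in the old one.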
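The same comparison can be automated. The Python sketch below is a hypothetical helper rather than an OSTree tool. It walks two deployment trees and uses (device, inode) pairs to tell files shared through hardlinks apart from files that changed between versions. On a board, it could be pointed at the two <commit hash>.0 directories under /sysroot/ostree/deploy/torizon/deploy/.

```python
import os


def inode_map(root: str) -> dict[str, tuple[int, int]]:
    """Map each entry os.walk lists as a file under root to (st_dev, st_ino)."""
    found = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            st = os.lstat(full)  # do not follow symlinks
            found[os.path.relpath(full, root)] = (st.st_dev, st.st_ino)
    return found


def classify_by_inode(old_root: str, new_root: str) -> dict[str, list[str]]:
    """Compare two deployment trees.

    Same (device, inode) in both trees: the file is a hardlink to one shared
    object, so it did not change. Different inode: the file changed.
    """
    old, new = inode_map(old_root), inode_map(new_root)
    common = old.keys() & new.keys()
    return {
        "shared": sorted(p for p in common if old[p] == new[p]),
        "changed": sorted(p for p in common if old[p] != new[p]),
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
    }
```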

As presented, we can conclude that OSTree does its due diligence to ensure that the system is always in a working state and can recover from crashes. The combination of the staging area used during downloads, the use of fsync(), and the hardlink farms guarantees that you will always have a working board, even on unexpected power loss. If you would like to understand how all of this is done, you can refer to the source code.

Remember that all of this is transparent to users. To see how things work from a user’s perspective, learn how to get started with Torizon remote updates.

Appendix I

First, install and set up TorizonCore Builder on your PC:

wget https://raw.githubusercontent.com/toradex/tcb-env-setup/master/tcb-env-setup.sh
source tcb-env-setup.sh

Then, create a TorizonCore Builder template:

torizoncore-builder build --create-template --file - > tcbuild.yaml

The template has three main sections:

- input: an input image that is compatible with your computer on module.

- customization: a directory with filesystem changes.

- output: an OSTree deployment.

See the file below for reference. You can replace the template content with it and make your own tweaks:

input:
  easy-installer:
    # >> (3) Image specification (URL will be generated by the tool)
    toradex-feed:
      version: "5.3.0"
      release: nightly
      machine: verdin-imx8mp
      distro: torizon
      variant: torizon-core-docker
      build-number: "339"
      build-date: "20210630"
customization:
  # >> Directories overlayed to the base OSTree
  filesystem:
    - changes/
output:
  # >> OSTree deployment configuration (relevant also for Easy Installer output).
  ostree:
    branch: my-dev-branch
    commit-subject: "Add Large Files"
    commit-body: "Add several 10MB files, for a total of 500 MB"

Next, on the board, create a dummy file under /etc:

sudo sh -c 'echo "hello" > /etc/hello.txt'

Back on your PC, capture the board configuration changes (basically the file you have just created) into a directory named changes:

torizoncore-builder isolate --remote-host 192.168.20.19 --remote-username torizon --remote-password 1234 --changes-directory changes

Add some large files to be deployed. You can use the following commands, which create 50 files of 10 MB each:

mkdir -p changes/large-files
i=0; until [ $i -ge 50 ]; do dd if=/dev/urandom of=changes/large-files/file$i.bin bs=1024 count=10240; i=$((i+1)); done

Generate the new OSTree commit with the dummy files:

torizoncore-builder build

Deploy it to the Torizon update server:

torizoncore-builder push --credentials credentials.zip my-dev-branch

Through the Torizon web interface, you can provision your device and deploy the update.

Leonardo Veiga, Technical Marketing Lead, Toradex
